AI can write code. It just can't maintain it — About the future of creative work

A new academic benchmark shows AI coding agents degrade with every iteration. Here's what that tells us about where AI genuinely replaces human work, and where it doesn't.

A research paper published last week by teams from the University of Wisconsin-Madison, MIT, and Washington State University tested 11 of the best AI coding agents on a deceptively simple challenge: write a program, then keep improving it over time as requirements change.

No agent completed a single problem end-to-end. The best checkpoint solve rate across all models was 17.2%.

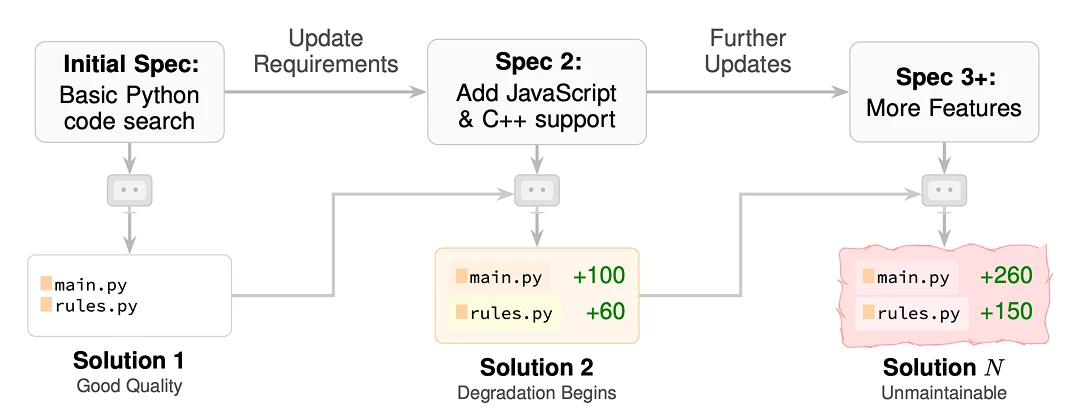

More interesting than the failure rate was how the agents failed. They didn't crash or produce broken code. They passed the tests at each step. They just produced code that became progressively worse with every iteration: more verbose, harder to extend, increasingly concentrated into massive functions that were technically correct but structurally unmaintainable. The researchers called this accumulation "slop."

The benchmark is called SlopCodeBench, and its findings say something important about software engineering and the nature of AI work in general.

In software engineering, slop is code that works but was written without thinking about what comes next. It solves the immediate problem and ignores the consequences. Each patch makes the next patch harder. Over time, the codebase becomes a liability: technically functional, practically unmaintainable.

The SlopCodeBench researchers found that AI agents produce slop at a measurable and consistent rate.

Agent-generated code is 2.2x more verbose than equivalent human-written code. Structural erosion (the concentration of complexity in functions that become too large to modify safely) rises in 80% of agent trajectories, and verbosity rises in 89.8%.
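The sketch below does not reproduce the paper's measurement of structural erosion. It is a hypothetical proxy, written only to make "concentration of complexity" concrete. It scores each function as 1 plus its decision points, a simplified cyclomatic count built on Python's standard `ast` module. It then reports the share of the total held by the most complex function. The names `complexity_by_function` and `concentration` are illustrative, not from SlopCodeBench. If you track the value across iterations, a number creeping toward 1.0 means complexity is piling into one place.

```python
import ast

# Hypothetical proxy, NOT the SlopCodeBench metric.
# Node types treated as decision points in this rough, McCabe-style tally.
DECISION_NODES = (ast.If, ast.For, ast.While, ast.ExceptHandler, ast.BoolOp, ast.IfExp)
FUNCTION_NODES = (ast.FunctionDef, ast.AsyncFunctionDef)


def _decision_points(func: ast.AST) -> int:
    """Count decision points inside one function, skipping nested functions."""
    count = 0
    stack = list(ast.iter_child_nodes(func))
    while stack:
        node = stack.pop()
        if isinstance(node, FUNCTION_NODES):
            continue  # nested functions get their own score
        if isinstance(node, DECISION_NODES):
            count += 1
        stack.extend(ast.iter_child_nodes(node))
    return count


def complexity_by_function(source: str) -> list[tuple[str, int]]:
    """Score every function in `source` as 1 + its decision points."""
    tree = ast.parse(source)
    return [
        (node.name, 1 + _decision_points(node))
        for node in ast.walk(tree)
        if isinstance(node, FUNCTION_NODES)
    ]


def concentration(source: str) -> float:
    """Share of total complexity held by the single most complex function."""
    scores = [score for _, score in complexity_by_function(source)]
    if not scores:
        return 0.0
    return max(scores) / sum(scores)


SAMPLE = '''
def small(x):
    return x + 1

def big(x):
    if x > 0:
        return 1
    elif x < 0:
        return -1
    for i in range(3):
        if i == x:
            return i
    return 0
'''

print(complexity_by_function(SAMPLE))  # → [('small', 1), ('big', 5)]
print(round(concentration(SAMPLE), 3))  # → 0.833
```

Which node types to count is a judgment call. Counting `match` cases or comprehension filters would shift the numbers, so read the result as a trend indicator, not an absolute score.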

Human-written code under the same conditions stayed flat, while AI code degraded with every iteration.

The researchers also tested whether better prompting could fix this. It helped initially: better prompts produced cleaner first drafts. But it didn't prevent degradation. By the later iterations, prompted and unprompted agents had converged on the same structural problems.

Why this happens

The root cause isn't that AI is bad at writing code. It's that AI optimizes for the present moment without carrying judgment about the future.

When an AI agent receives a new requirement, it effectively asks:

What's the fastest way to make this work, given what already exists?

It patches. It extends. It adds branches to functions that already have too many and duplicates logic because duplication is faster than refactoring. Each individual decision is locally reasonable. The cumulative effect is a codebase that nobody, human or AI, can safely modify.
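Here is a toy illustration of that pattern. The function names and shipping rates are invented and do not come from the paper. The first version absorbed each new requirement as another branch, including one copied wholesale from its neighbor. The second version moves the data that varies into a table, so the logic exists only once. Both return the same results.

```python
# Hypothetical example: the "patched" version grew one branch per new requirement.
def shipping_cost_patched(region: str, weight_kg: float) -> float:
    if region == "US":
        return 5.0 + 1.0 * weight_kg
    elif region == "EU":
        return 7.0 + 1.2 * weight_kg
    elif region == "UK":  # added in a later iteration, formula copied from EU
        return 7.0 + 1.2 * weight_kg
    elif region == "CA":  # added later still
        return 6.0 + 1.1 * weight_kg
    else:
        raise ValueError(f"unknown region: {region}")


# Refactored version: per-region data lives in a table, the formula lives once.
RATES = {
    "US": (5.0, 1.0),  # (base fee, cost per kg)
    "EU": (7.0, 1.2),
    "UK": (7.0, 1.2),
    "CA": (6.0, 1.1),
}


def shipping_cost(region: str, weight_kg: float) -> float:
    try:
        base, per_kg = RATES[region]
    except KeyError:
        raise ValueError(f"unknown region: {region}") from None
    return base + per_kg * weight_kg
```

Neither version is wrong today. The difference shows up in the next change. In the patched version, a new region means another branch and another copied formula. In the table-driven version, it means one new entry.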

This is the distinction the paper forces into focus: the difference between execution and judgment. AI executes well. It generates, it produces, it passes tests.

What it lacks is the architectural instinct that tells an experienced developer: this shortcut will cost us three times as much in six months. Stop. Refactor now.

That instinct isn't a technique. It's not something you can put in a prompt. It comes from having lived through the consequences of cutting corners.

The same pattern repeats outside of code

Software development is just the domain where this is easiest to measure. The same dynamic plays out everywhere AI is used for iterative, consequential work.

In marketing, an AI can write a campaign brief, generate variations, and produce content at scale. What it can't do is notice that the brand voice has been drifting for three months, that the messaging has quietly shifted away from what actually resonates with the core audience, or that short-term optimization for clicks is eroding the long-term trust that keeps the audience coming back.

That requires someone who has been paying attention across the whole arc, not just the current task.

In design, AI can generate assets that look right individually. What it struggles with is coherence over time.

That means maintaining the visual logic of a brand across dozens of outputs, in different formats, produced by different people using different tools. That coherence isn't in any single file. It lives in the judgment of whoever is directing the work.

In any creative discipline, the pattern is the same: AI handles the execution well. The judgment about what to execute, when to change direction, and what the work is adding up to remains stubbornly human.

What this means for the "AI will replace everyone" argument

The SlopCodeBench findings complicate the simple narrative that AI will replace knowledge workers wholesale. They suggest something more nuanced:

AI replaces the parts of work that can be evaluated in isolation. It struggles with the parts that can only be evaluated in context: across time, across iterations, with awareness of what the whole thing is supposed to become.

The developers who get displaced first are the ones whose value was primarily in execution: writing boilerplate, converting specs into working code, producing output to a defined standard. The ones who remain valuable are those whose contribution was always the judgment layer: the architects, the senior engineers who knew when not to ship, the people who could look at a codebase that passes every test and tell you it was heading toward collapse.

This isn't a comfortable argument for anyone. The execution layer is where most people start. But the direction it points is clear: the durable value in knowledge work has always been in the judgment, not the output.

At bottom, SlopCodeBench is a benchmark about what AI is actually good at versus what it merely appears to be good at.

AI appears to be good at maintenance. It passes the tests and produces output at every checkpoint. But the output quietly degrades. The degradation is invisible in any single step and visible only across the whole trajectory. That's worth keeping in mind as AI tools become standard across every discipline. The question isn't whether AI can produce a result. It can, it will, and the result will often look fine.

The question is who is watching the trajectory. Who is noticing the drift. Who is making the call that the current direction needs to change before the accumulated slop becomes impossible to reverse.

That person is not getting replaced.

If anything, they're becoming more valuable, because there are now more outputs to watch, more trajectories to track, and fewer people paying attention to the whole.

If you're a creative professional using AI tools for production work (video, image, audio, marketing assets), Artificial Studio is built around that model: AI handles the execution, you direct the output.